When it comes to software developers, there are a few distinct types. There is, for example, the extroverted, chatty type, who is always out there sharing the latest and newest libraries and projects with everyone, and who is very much into bouncing ideas off others, regardless of whether those others know what is being talked about. Then there is the introverted loner, who prefers to tackle programming challenges by bouncing things around inside their own mind and going on long walks to mull things over before committing to anything significant.

This leads to interesting scenarios when it comes to management-enforced ‘optimization’ strategies, like Pair Programming. This approach involves two developers sharing the same computer and keyboard, theoretically doubling the effective output by some kind of metric, but realistically often leading to at least one side feeling pretty miserable and disconnected unless you put two of the chatty types together.

As a certified introverted loner developer, the idea of using an LLM chatbot as a coding assistant naturally triggers unpleasant flashbacks to hours of forced awkward pair ‘programming’. However, maybe using an LLM chatbot could be more pleasant because you can skip the whole awkward socializing bit. In order to give it a shake, I put together a little experiment to see whether LLM-based coding assistants are something that I could come to appreciate, unlike pair programming.

Setting Expectations

Any good experimental setup features clear goals and parameters that define what will be tested and what the expectations are. Obviously I come from a somewhat negative angle into this whole experiment, so to make it easy I’ll be picking two fairly straightforward scenarios for the LLM to assist with:

- C++ embedded coding for STM32 and CMSIS.

- Ada network development.

These are topics that I’m fairly familiar and comfortable with, so that I know what questions I have here, and what I’m roughly expecting as output. I’ll be treating the chatbot for the most part as I would use StackOverflow or nag people on IRC, with my main fear being that it’ll be expecting pleasantries from me instead of brutal and cold professionalism. Ideally it’ll be a step above me hurling profanities at a search engine for clearly willfully misunderstanding what I am looking for.

My expectations are that it’ll have some answers for me for the questions I have about how to do certain aspects of the tasks, and may even produce half-way usable code that I can fairly easily understand and double-check using my usual documentation references.

This just leaves one big question, being which LLM chatbot to pick and how the heck any of it is supposed to work, since I have avoided the things like the proverbial plague.

Meeting the Crew

Although I am aware that everyone who is into LLM-assisted programming seems to like promoting LLMs like Claude, I’d ideally not be signing up to another service. This pretty much just leaves GitHub Copilot, which I have access to already. I have written about this particular LLM chatbot quite a bit since it was introduced, with my generally negative feelings towards these tools increasingly backed up by research.

Biased I may be, but to be a true scientist you have to be able to set aside your biases for an experiment and accept reality in the face of new evidence. Thus, with all biases and doubts firmly pushed aside in favor of the aforementioned cold professionalism, let’s get down to brass tacks.

Micro Code

My pet project for STM32-related programming has for a while been my Nodate project, involving the use of the CMSIS standard headers and the macros defined therein in order to write things ranging from start-up to running the Dhrystone benchmark and deciphering the various flavors of real-time clocks.

Much of this work entails digging through datasheets, reference manuals and piles of reference code, as well as throwing queries at search engines to see what potentially useful results percolate out of that particular resource. Coming across the trials and tribulations of fellow STM32 developers in forum threads and the like can be both heartening and disheartening, but all of it tends to condense into something that you can use to progress in the project.

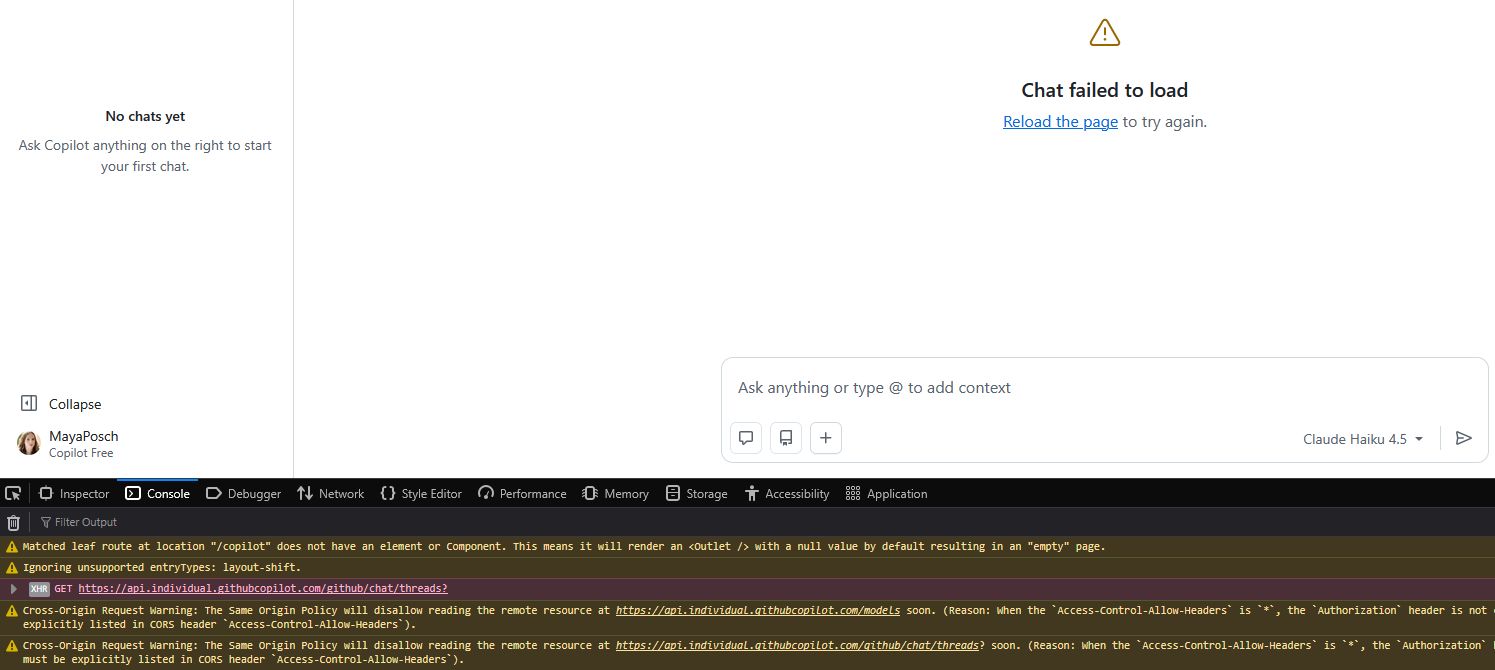

Perhaps ironically, the moment that I tried to use the chatbot in the browser I got an error with the GitHub status page indicating that some of their systems are down, including those for Copilot.

This raises another interesting point: regardless of whether an LLM chatbot makes for a good programming partner, a human partner doesn’t generally randomly keel over or become unresponsive in the midst of trying to do some work together. If they do, however, that’s absolutely a medical emergency and you should call 911, 112, or your local equivalent emergency number stat.

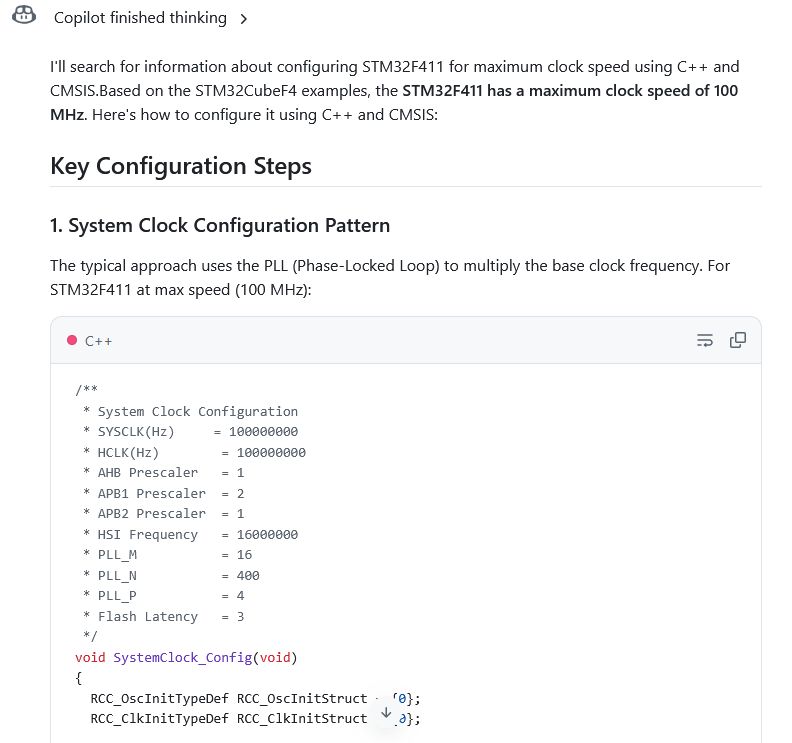

Anyway, after waiting for services to be restored, I was eventually able to ask the chatbot how to properly set the clock speed on an STM32F411 MCU, after getting tripped up previously by the need to set the regulator voltage scaling (VOS) in the power control register (PWR_CR). This is a power saving feature that has to be adjusted before the MCU can hit specific, and clearly power-wasting, clock speeds.

Shockingly, the chatbot happily spits out ST HAL code and ignores the ‘CMSIS’ bit, although you could maybe argue that the ST HAL uses CMSIS inside. But then so does Arduino code for many MCUs.

To its credit, it does mention in a ‘Key CMSIS Requirements’ list that you need to set PWR_REGULATOR_VOLTAGE_SCALE1, yet without further detail on where to set it. There is also the tiny detail that this isn’t even the CMSIS macro, which would be PWR_CR_VOS to set both bits for the full range.
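For reference, the register-level operation in question is tiny. What follows is a minimal host-side sketch rather than firmware: the constants `kPwrCrVosPos` and `kPwrCrVosMsk` and the helpers `with_vos` and `select_scale1` are names made up for illustration, standing in for the `PWR_CR_VOS_Pos` and `PWR_CR_VOS` definitions of the CMSIS device header. It assumes that the VOS field sits in bits 15:14 of PWR_CR and that the register resets to 0x00008000, both of which should be checked against the reference manual.

```cpp
#include <cstdint>

// Illustrative stand-ins for the CMSIS device header definitions
// (PWR_CR_VOS_Pos / PWR_CR_VOS). Assumption: VOS is bits 15:14 of PWR_CR.
constexpr std::uint32_t kPwrCrVosPos = 14U;
constexpr std::uint32_t kPwrCrVosMsk = 0x3U << kPwrCrVosPos;

// Read-modify-write of the VOS field. 'cr' stands in for the value of PWR->CR;
// every bit outside the VOS field is preserved.
constexpr std::uint32_t with_vos(std::uint32_t cr, std::uint32_t vos_field) {
    return (cr & ~kPwrCrVosMsk) | ((vos_field << kPwrCrVosPos) & kPwrCrVosMsk);
}

// Scale 1 means both VOS bits set, which is exactly the full mask.
constexpr std::uint32_t select_scale1(std::uint32_t cr) {
    return with_vos(cr, 0x3U);
}

// Starting from the assumed reset value (only bit 15 set), selecting
// Scale 1 yields both bits set.
static_assert(select_scale1(0x00008000U) == 0x0000C000U,
              "Scale 1 should set both VOS bits");
```

On the actual hardware this would boil down to enabling the PWR peripheral clock with `RCC->APB1ENR |= RCC_APB1ENR_PWREN;` followed by `PWR->CR |= PWR_CR_VOS;`, with both macros coming from the included CMSIS device header instead of being redefined by hand.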

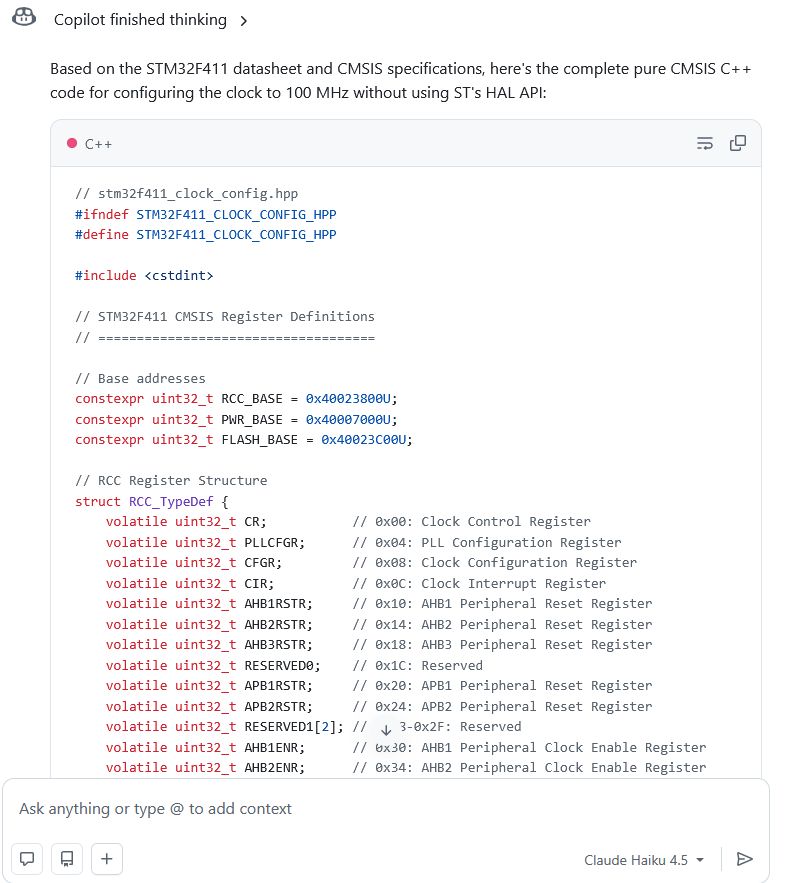

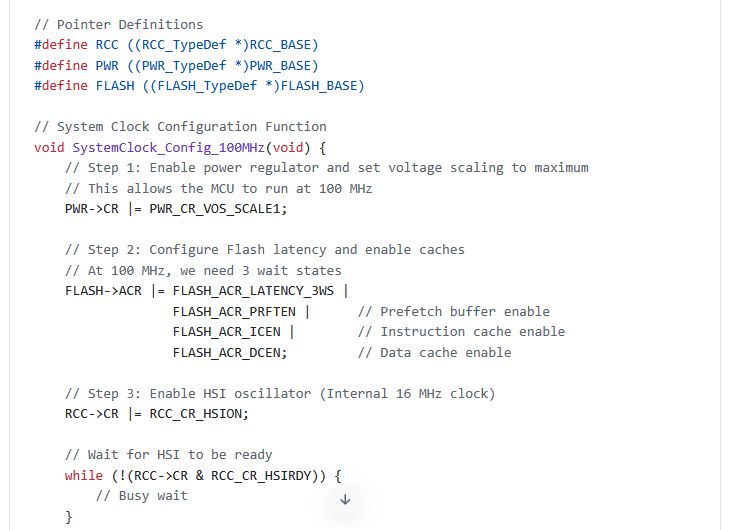

Fortunately we can do the digital equivalent of smacking the chatbot upside the head and tell it to do the thing we asked it to do, namely to provide the real CMSIS version. Doing so results in another gobsmacking moment when it happily spits out code that doesn’t bother to include the CMSIS headers, but simply copies every single used struct definition and more into the code as well, bloating it up massively:

This is of course very annoying when it should have used #define macros, and it clearly can generate include statements based on its inclusion of <cstdint>, but the absolutely deadly sin here is that this code isn’t even functional for an STM32F411, as can be observed here:

I’m not entirely sure where it got the PWR_CR_VOS_SCALE1 thing from, with asking a friendly search engine leading to just a handful of results, one of which is for an STM32F407 that runs at 168 MHz max. This is hilarious in light of the comments right above the code. It makes you wonder what example code it pilfered from.
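To make the mismatch between those two parts concrete, here is a small sketch of how the VOS setting relates to the target clock on the STM32F411. The function name `required_vos_field` is hypothetical, and the thresholds (64 MHz, 84 MHz and 100 MHz for Scale 3, 2 and 1 respectively) as well as the field encodings reflect my reading of the F411 reference manual, so treat them as assumptions to double-check rather than gospel.

```cpp
#include <cstdint>

// Returns the assumed 2-bit VOS field value needed for a given HCLK on an
// STM32F411, or -1 if the requested frequency exceeds the part's rated maximum.
constexpr int required_vos_field(std::uint32_t hclk_hz) {
    return hclk_hz <= 64000000U  ? 0x1   // Scale 3
         : hclk_hz <= 84000000U  ? 0x2   // Scale 2 (assumed reset default)
         : hclk_hz <= 100000000U ? 0x3   // Scale 1
         : -1;                           // not reachable on this part
}

// The F411 tops out at 100 MHz, so a 168 MHz target taken from
// STM32F407 example code cannot be satisfied here.
static_assert(required_vos_field(100000000U) == 0x3, "100 MHz needs Scale 1");
static_assert(required_vos_field(168000000U) == -1, "168 MHz is out of range");
```

Even a lookup this trivial shows why code written for a 168 MHz part can't simply be pasted into an F411 project without checking the limits.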

At this point I could probably continue to pick at this generated code, but suffice it to say that my confidence level in its generated code and overall output hovers somewhere between ‘low’ and ‘bottom of a black hole’. I’m more than happy to flip this particular table, rage quit, and not lose what remains of my sanity.

Findings

Although I had intended to also do some fun porting to Ada together with my buddy Copilot of some C++ networking code in my NymphRPC remote procedure call library, I found my nerves to be sufficiently frayed and the bouts of near-hysterical laughter out of sheer disbelief worrisome enough to abort this attempt.

I also do not feel that it’d do much more than hammer home the point that GitHub Copilot at the very least doesn’t make for a good pair programming partner, nor for a good programming tool, search engine, or much of anything. When the only thing that it got me was having to check its output for very obvious errors and shaking my head in disbelief when I found them, it beggars belief that anyone would voluntarily use it.

When we also get reports that the use of such LLM chatbots is likely to degrade human cognition and critical thinking skills, not to mention the worrisome prospect of cognitive surrender, then it’s probably best to avoid these chatbots altogether.

I also generally agree with Advait Sarkar et al. in their 2022 paper that you cannot really do pair programming as such with an LLM chatbot, but that it offers something different. Something that’s very different from using a search engine and digesting various articles and forum posts along with reference material into something new.

Thus, after using an LLM chatbot for some coding ‘assistance’ I’ll be happily scurrying back to my boring references and yelling invectives at search engines.